The mandate of modern Platform Engineering is to reduce cognitive load. When a software engineer wants to deploy a microservice, the Internal Developer Platform (IDP) should provide a “Golden Path” that abstracts away the underlying Kubernetes plumbing.

However, the explosive rise of Generative AI has broken this abstraction.

Today, if a Machine Learning (ML) engineer wants to deploy a Large Language Model (LLM) using an inference engine like vLLM or NVIDIA Triton, they are suddenly forced to become infrastructure experts. They quickly discover that standard Horizontal Pod Autoscaler (HPA) CPU and memory metrics are useless for AI workloads. A node’s CPU might sit idle at 10% while the physical GPU is 100% saturated with a massive queue of pending tensor operations.

To scale these workloads, platform teams typically force ML engineers to navigate a labyrinth of infrastructure: deploying dcgm-exporter, configuring Prometheus scraping intervals, and writing complex PromQL queries to feed into KEDA (Kubernetes Event-driven Autoscaling).

This is not a Golden Path. It is an operational anti-pattern. To fix the Developer Experience (DevEx) for AI workloads, we must push the complexity down the stack.

The Prometheus Latency Trap

Relying on a centralized observability stack to drive real-time scaling decisions introduces critical architectural flaws:

- The Latency Window: Prometheus typically scrapes metrics every 15 to 30 seconds. In the world of LLM inference, a 30-second delay in scaling a deployment to handle a traffic spike results in severe latency degradation and dropped tokens for the end-user.

- The Observability Tax: Centralized metric servers become a massive single point of failure and bottleneck when scraping high-fidelity GPU telemetry across hundreds of nodes.

- Cognitive Load: Data scientists should be tuning hyperparameters, not writing PromQL queries to calculate Streaming Multiprocessor (SM) utilization.

The Solution: Edge-Native Hardware Telemetry

To build a true self-service AI platform, the scaling mechanism must be native, real-time, and completely transparent to the end-user.

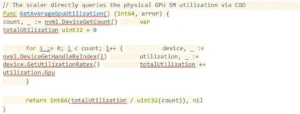

To solve this, I built the keda-gpu-scaler, an open-source, high-performance KEDA External Scaler that completely bypasses Prometheus and interrogates the physical NVIDIA hardware directly.

Architectural Deep Dive: The NVML DaemonSet

Because the central KEDA operator lives on the Kubernetes control plane, it cannot execute local C-bindings to read hardware states across a distributed cluster.

The keda-gpu-scaler solves this by utilizing an edge-compute architecture. It is deployed as a lightweight DaemonSet strictly targeted at GPU-provisioned nodes.

Instead of waiting for a metric exporter to format data for a central server, the scaler uses go-nvml (NVIDIA Management Library C-bindings) to read the raw hardware telemetry directly from the host machine’s device drivers in real-time.

The DaemonSet exposes an externalscaler.ExternalScalerServer gRPC interface. When KEDA needs to evaluate if an LLM deployment requires scaling, it queries the DaemonSet directly over the local cluster network.

Restoring the Golden Path

By implementing this architecture, the Platform Engineering team absorbs the infrastructure complexity. The ML engineer is no longer required to understand PromQL or cluster observability pipelines.

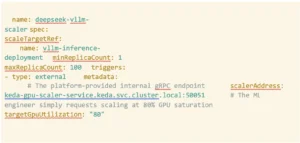

To scale their massive foundational models, the ML engineer simply attaches a clean, declarative ScaledObject to their deployment. The target threshold is defined as a simple percentage of hardware saturation:

The Future of AI Platforms

Building a successful Internal Developer Platform is about providing powerful primitives with simple interfaces. As enterprise clusters become increasingly heterogeneous—mixing standard CPUs, GPUs, and TPUs—our autoscaling mechanisms must become hardware-aware at the edge of the network.

By eliminating the Prometheus middleman, the keda-gpu-scaler reduces scaling latency to near-zero and restores the DevEx for AI infrastructure teams.

If you are building an AI platform and fighting the CPU-scaling bottleneck, the code is open-source. I welcome contributions, issues, and PRs as we build the next generation of hardware-aware Kubernetes scaling.