When the platforms we use to build platforms start breaking, it’s time to pay attention.

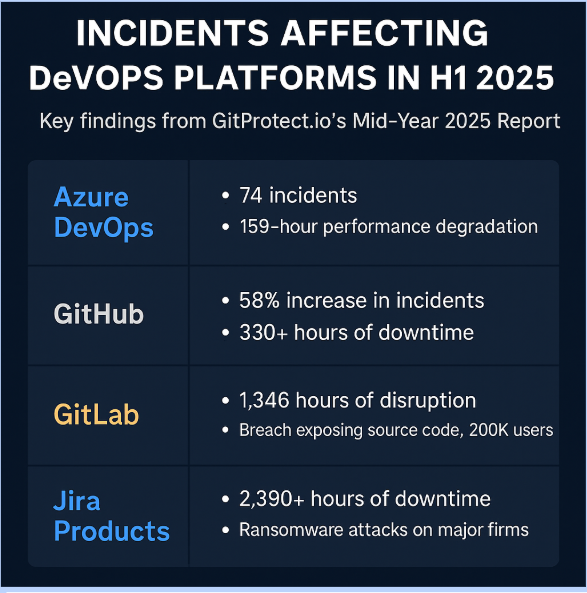

GitProtect.io’s DevOps Threats Unwrapped: Mid-Year Report 2025 shines a spotlight on something platform engineering teams can’t afford to ignore: The core tools we’ve abstracted into our internal developer platforms (IDPs) — GitHub, GitLab, Azure DevOps, Jira — are increasingly unstable, increasingly targeted and increasingly putting the resilience of our platforms at risk.

In just the first six months of 2025, there were 330 recorded incidents across these major DevOps platforms. We’re not just talking about momentary blips or planned maintenance. We’re talking about hours — and in some cases, days — of degraded performance, critical outages, and cybersecurity breaches that impact developer productivity, automation and trust.

This isn’t a Git problem. It’s not a CI problem. It’s a platform reliability problem.

GitHub’s 58% Incident Spike is Your Platform’s Problem

GitHub had 109 incidents in H1 2025 — up 58% from the same period in 2024. That’s not just noise. For platform engineers who’ve designed IDPs with GitHub as the single source of truth, this is a resilience red flag.

If your golden path assumes GitHub is “always up,” your platform just got more brittle.

- 17 of the incidents were major, with over 100 hours of impact

- April saw 330 hours of downtime, affecting everything from repo sync to GitHub Actions

- If you’re running GitOps pipelines (Argo CD, Flux, Jenkins X), your automation likely broke silently

As platform teams push toward standardized workflows and Git-based approvals, even a minor disruption becomes a bottleneck for every developer using the platform.

Azure DevOps Pipelines Degraded for 159 Hours

Microsoft’s Azure DevOps platform recorded 74 unique incidents, with Pipelines disrupted 31 times — the most of any service on the platform.

The worst event? A 159-hour global performance degradation in January that all but paralyzed build and deploy processes.

Let that sink in: If your IDP is routing builds through Azure Pipelines, you lost nearly a week of reliable delivery capability.

This isn’t just a service-level concern — it’s an architectural one. If your platform is tightly coupled to a single provider’s CI/CD stack, how resilient is your golden path, really?

GitLab’s 1,346 Hours of Disruption + Source Code Breach

GitLab experienced 59 incidents totaling over 1,346 hours of disruption in H1 2025. But downtime wasn’t the only concern. The Europcar Mobility Group breach revealed just how exposed some Git-based workflows can be:

- Attackers stole GitLab repo data and customer PII

- Source code from Android and iOS apps was exfiltrated

- 200,000+ users were impacted

Platform teams that rely on GitLab for pipelines, issue tracking and storage must now contend with a new responsibility: integrating zero-trust principles and data recovery capabilities directly into their platform’s SDLC experience.

Jira: 100 Days of Downtime and a Ransomware Wake-Up Call

Jira, Jira Service Management and other Atlassian products logged 66 incidents in H1 2025, totaling over 2,390 hours of downtime. Free-tier users in Singapore and Northern California were among the hardest hit, but the real eye-opener came in the form of ransomware.

The HellCat group targeted Jira environments across multiple global enterprises, compromising credentials and locking teams out of their own issue management systems.

For platform engineers building integrated feedback loops between IDPs and Jira, this highlights an often-overlooked truth: incident tracking tools are part of your reliability surface area.

Platform Engineering = Resilience Engineering

GitProtect’s report underscores a truth we already know, but often don’t design for: Platforms fail. And when the tools we’ve abstracted into our platforms fail, everything else breaks with them.

So what do we do?

Platform engineering teams must:

- Decouple golden paths from single-vendor assumptions

- Design fallback strategies for source control, CI/CD, and issue tracking

- Treat third-party dependencies as failure domains

- Incorporate backup, auditability, and DR into the developer experience

- Embed security and observability controls natively into the platform

We spend a lot of time refining golden paths. But what GitProtect’s data tells us is that we also need resilient exit ramps, redundant control planes, and real-time awareness of upstream provider instability.

From GitOps to PlatformOps: Expanding the Resilience Mandate

As we move toward PlatformOps maturity, where self-service portals, composable pipelines, and developer productivity dashboards are standard, the risk of invisible fragility grows. What happens when your Git provider is down but the developer doesn’t know it? What happens when a build silently fails due to upstream rate limiting?

This is where resilience shifts from being a platform feature to a platform principle.

As GitProtect’s Greg Bak puts it:

“Anticipating failures before they happen, paired with self-healing infrastructure and recovery strategies that go beyond just technology, will redefine how organizations safeguard uptime, data integrity and business continuity.”

Conclusion: The Platforms You Build On are Now Part of Your Platform

Platform engineering is about abstraction — but also about accountability. When the DevOps stack starts to crack, it’s the platform team that has to absorb the blow, reroute traffic and maintain trust with devs.

The lesson from this report is clear: Platform teams must treat upstream services as failure-prone nodes, not infallible black boxes.

👁️🗨️ Review your assumptions.

⚙️ Audit your integrations.

🛠️ Engineer for failure.

Because in 2025, the platforms we build on aren’t just enabling DevOps, they’re exposing the limits of platform resilience.

📖 Read GitProtect’s full report